Posted on April 20, 2016

This is a problem which has come up in my research, and I'm documenting it, as I think it's a pretty interesting problem. (Not just because I'll forget why I did this if I don't write about it, no not at all :P)

This is a problem that arose from my research, so it's unfortunately not removed from context. The best I can do is give you a rundown on the moving pieces before I use them.

I've been working on a formalization of [Featherweight Java][FJ], which is what you get when you strip down Java till you hit lambda calculus. It's actually probably closer to lambda calculus, replacing lambdas with methods on objects.

// Two basic classes. We don't sully ourselves with Ints or other base types.

class A extends Object {

A() { super(); }

}

class B extends Object {

B() { super(); }

}

class Pair extends Object {

Object fst;

Object snd;

// Constructors are always trivial,

// and just set each field to the matching parameter.

Pair(Object fst, Object snd) {

super(); this.fst=fst; this.snd=snd;

}

// Every method is just an single expression.

// We also eschew mutation, always constructing a new object instead.

Pair setfst(Object newfst) {

return new Pair(newfst, this.snd);

}

}

But most of my work as been in Coq, trying to represent this and then prove things about it.

For this post, we focus almost exclusively on the Class Table, which is represented as a list [(class, (parent_class, fields, methods)]. So for this example, one representation of the class table would be

Definition example_CT := [

(Pair, (Object, [fst, snd], [constr_Pair, setfst])),

(B, (Object, [], [constr_A])),

(A, (Object, [], [constr_A]))]

where I don't actually care about how you represent individual methods or fields.

Note that we could have swapped the rows for A and B and it wouldn't really have mattered. It would make no sense to talk about classes that aren't in the class table (or are Object), so we have a predicate ok_type_ CT C which just says that either C is in CT, or C = Object.

We do want to enforce that you can't make loops like

[ (C, (D, fs1, ms1)), (D, (C, fs2, ms2)) ]

as we want every chain of inheritance to terminate in Object at some point. We also want to rule out redefining a class:

[ (C, (D, fs1, ms1)), (C, (E, fs2, ms2)) ]

I call this constraint directed_ct, and it's defined inductively for the class table list.

Inductive directed_ct : ctable -> Prop :=

| directed_ct_nil : directed_ct nil

| directed_ct_cons :

forall (C D : cname) (fs : flds) (ms: mths) (ct : ctable),

directed_ct ct ->

C \notin (keys ct) -> (* No duplicate bindings *)

ok_type_ ct D -> (* No forward references *)

directed_ct ((C, (D, fs, ms)) :: ct).

We have a few utility functions for talking about indexing into the class table, notable binds, where we say binds C (D, fs, ms) CT to mean there exists an entry like (C, (D, fs, ms)) in CT. extends_ is a way to say this without naming fs and ms directly.

Sub Class.

Now that the preliminaries are covered, I'm going to show two different definitions for a strict subclassing relation.

Inductive ssub_p (CT:ctable) : typ -> typ -> Prop :=

| ssub_p_trans : forall A B C,

ssub_p CT A B ->

ssub_p CT B C ->

ssub_p CT A C

| ssub_p_extends : forall C D, extends_ CT C D -> ssub_p CT C D.

Inductive ssub_ : ctable -> typ -> typ -> Prop :=

| ssub_trans : forall CT A B C,

ssub_ CT A B ->

ssub_ CT B C ->

ssub_ CT A C

| ssub_extends : forall CT C D, extends_ CT C D -> ssub_ CT C D.

These are almost the same, but ssub_ is said to index over CT, where are ssub_p is parametric in the choice of CT.

I found this Stack Overflow answer to be quite helpful. Let me just quote a little from it:

Parameters are merely indicative that the type is somewhat generic, and behaves parametrically with regards to the argument supplied.

What this means for instance, is that the type List T will have the same shapes regardless of which T you consider: nil, cons t0 nil, cons t1 (cons t2 nil), etc. The choice of T only affects which values can be plugged for t0, t1, t2.

Indices on the other hand may affect which inhabitants you may find in the type! That's why we say they index a family of types, that is, each indice tells you which type in the family you are looking at (in that sense, a parameter is a degenerate case where all the indices point to the same family).

For instance, the type family Fin n or finite sets of size n contains very different structures depending on your choice of n.

The index 0 indices an empty set. The index 1 indices a set with one element.

In that sense, the knowledge of the value of the index may carry important information! Usually, you can learn which constructors may or may not have been used by looking at an index. That's how pattern-matching in dependently-typed languages can eliminate non-feasible patterns, and extract information out of the triggering of a pattern.

This still didn't fully clear this up for me, I needed to look at the induction schemes for each to really get it. If you haven't looked at the generated induction schemes for inductive types yet, I would recommend checking out the Certified Programming with Dependent Types chapter on this first.

ssub_p_ind

: forall (CT : ctable) (P : typ -> typ -> Prop),

(forall A B C : typ, (* trans *)

ssub_p CT A B -> P A B ->

ssub_p CT B C -> P B C ->

P A C) ->

(forall C D : cname, (* extends *)

extends_ CT C D -> P C D) ->

forall C D : typ,

ssub_p CT C D -> P C D

ssub__ind

: forall P : ctable -> typ -> typ -> Prop,

(forall (CT : ctable) (A B C : typ), (* trans *)

ssub_ CT A B -> P CT A B ->

ssub_ CT B C -> P CT B C ->

P CT A C) ->

(forall (CT : ctable) (C D : cname), (* extends *)

extends_ CT C D -> P CT C D) ->

forall (CT : ctable) (C D : typ),

ssub_ CT C D -> P CT C D

I know that's a big block of code to look at, but it's the differences I want to highlight. Lets just look at the conclusions of each. For ssub_p_ind we get that forall C D, ssub_p CT C D -> P C D, but for ssub__ind, we get forall CT C D, ssub_ CT C D -> P CT C D.

Same with the two cases for transitivity and direct extension, ssub__ind always does a forall CT, where as ssub_p_ind has just that one forall CT at the very start.

I know that ssub__ind is more general, as I was able to prove ssub_p_ind given ssub__ind, by specializing the inductive cases to the choice of CT, but I was not able to prove the other direction. I pose that it is impossible, but I am happy to hear any counterexamples.

This seems like good news, we can just do all our work with ssub__ind, and everything will work out, as it's stronger. However, in practice, Coq runs into some trouble if you try and do this. Here's a lemmma that's much easier to solve with ssub_p_ind.

Lemma no_ssub_with_empty_table C D

(H_sub: ssub_ nil C D)

:

False.

This simply states that if you haven't declared any more classes than Object, you can't have any strict subclassing relationships. Using the parametric induction scheme:

Proof.

induction H_sub using ssub_p_ind.

- (* The inductive hypothesis is immediately false, easy *)

exact IHH_sub1.

- (* We get a term H : extends_ nil C D, which unfold to

exists fs ms, (C, (D, fs, ms)) \in nil. which is another easy contradiction. *)

unfold extends_.

auto.

Qed.

It's quite trivial, as in both cases we get easy contradictions. However, if we try it with the other induction scheme, we get

Proof.

induction H_sub.

- (* Still get a False hypothesis *)

exact IHH_sub1.

- (* But now we get H : extends_ CT C D, which doens't give a contradiction

to us at all *)

Abort.

What went wrong? Well, remember that we are trying to fill in an argument for ssub__ind. Lets take another look at the definition for the extends case:

...

(forall (CT : ctable) (C D : cname), (* extends *)

extends_ CT C D -> P CT C D) ->

...

That's right, we have to show that this holds for all such CT. This severely cramps our style. Lets see if there's a way to force it to work anyways, because why not. We are going to start with refine instead, and be super explicit.

(* Exactly the same as above *)

Proof.

refine (ssub__ind

(* P *) (fun CT C D => False)

(* H_trans *) _

(* H_extends *) _

nil C D H_sub).

-

(* goal :

forall (CT : ctable) (t1 t2 t3 : typ), ssub_ CT t1 t2 -> False -> ssub_ CT t2 t3 -> False -> False

*)

auto. (* False -> False, easy *)

-

(*

forall (CT : ctable) (t1 t2 : cname), extends_ CT t1 t2 -> False

*)

(* still screwed! *)

Abort.

Now that we see what we've done, lets try and do something more clever. Lets add a condition to P that CT = nil, so in the second case we only have to prove:

forall (CT : ctable) (t1 t2 : cname), extends_ CT t1 t2 -> CT = nil -> False.

That seems much more reasonable. Lets try it!

Proof.

intros H_sub.

refine (ssub__ind

(* P *) (fun CT C D => CT = nil -> False) (* added that *)

(* H_trans *) _

(* H_extends *) _

nil C D H_sub eq_refl). (* We also had to add the term (eq_refl: nil = nil) *)

- auto. (* still trivial *)

- (* forall (CT : ctable) (t1 t2 : cname), extends_ CT t1 t2 -> CT = nil -> False *)

clear.

intros CT C D H_extends H_eq.

(* We have

H_extends : extends_ CT C D

H_eq : CT = nil

*)

rewrite H_eq in H_extends.

(* H_extends: extends_ nil C D *)

(* we are in the same place as above, easy. *)

unfold extends_.

auto.

Qed.

I think this counts as an application of the convoy pattern, as seen here. (Speaking of that site, I would love to have overlays for proofs like that blog, and have some ideas on how to generate it automatically. But work comes first.)

Now, let me show you where this falls apart.

Lemma strengthen_ssub (CT:ctable) C D A B ms fs

(H_dir: directed_ct ((A, (B, fs, ms)) :: CT))

(H_ok_C_s: ok_type_ ((A, (B, fs, ms)) :: CT) C)

(H_noobj: Object \notin dom ((A, (B, fs, ms)) :: CT))

(H_neq: A <> C)

(H_sub: ssub_ CT C D)

(H_ok_D: ok_type_ CT D)

: ssub_ ((A, (B, fs, ms)) :: CT) C D.

This induction cannot be proven with ssub_p, we need to have the choice of CT be more determined. I think. I tried pretty hard, and even asked in the irc channel to try and solve it with the parametric way. But I always ended up with new fresh variables getting generated for C and D, which prevented me from ruling out that they were A and B.

Here's a snapshot of it failing.

But I did manage to prove it using ssub__ind, though it also required passing the hypothesis directly.

Here's a lemma for the symmetric case:

Lemma strengthen_ssub_case_2 (CT : ctable)

(C : cname) (D : cname)

(A : cname) (B : cname)

(E : cname) (F : cname)

(ms1 : mths) (fs1 : flds)

(fs2 : flds) (ms2 : mths)

: ssub_ CT C D ->

directed_ct CT ->

Object \notin dom CT ->

A <> C ->

E <> C ->

A \notin keys CT ->

E \notin keys CT ->

ssub_ ((A, (B, fs1, ms1)) :: (E, (F, fs2, ms2)) :: CT) C D.

I feel conflicted about naming these. I've mostly use C and D as the classes that show up in the final statement, but G and H don't sound like class names as much as A and B do, so I don't use 4 consecutive letters for the secondary class names. Bleh.

Proof.

refine ((ssub__ind

(* P *) (fun CT X Y => forall

(H_dir: directed_ct CT)

(H_noobj: Object \notin dom CT)

(H_neq1: A <> X)

(H_neq2: E <> X)

(H_notin1: A \notin keys CT)

(H_notin2: E \notin keys CT ),

ssub_ ((A, (B, fs1, ms1))::(E, (F, fs2, ms2))::CT) X Y)

(* H_Trans *) _

(* H_Extend *) _)

CT C D).

I left the hypothesis to the lemma as implications rather than naming then, as they only get applied to the result of ssub__ind, and would need to be cleared anyways. I could rearrange the order and put a forall CT C D, to avoid feeding those arguments to ssub__ind and clearing them afterwards, but I think it's better to name them.

- (* trans *)

clear CT C D.

intros CT t1 t2 t3.

intros H_sub_1 IHH_sub_1 H_sub_2 IHH_sub_2.

intros. (* as named above in P. *)

apply ssub_trans with (t2:=t2).

+

apply IHH_sub_1; assumption.

+ (* Need A <> t2, E <> t2 *)

assert (t2 \in keys CT). {

apply ssub_child_in_table with (D := t3); assumption.

}

assert (A <> t2). {

destruct (A == t2).

subst.

contradiction.

auto.

}

assert (E <> t2). {

destruct (E == t2).

subst.

contradiction.

auto.

}

apply IHH_sub_2; assumption.

It's actually easier to prove the transitivity case when you have fewer hypothesis for P. I started out with P just concluding ssub_ ((A, (B, fs1, ms1))::(E, (F, fs2, ms2))::CT) X Y, and the transitivity case was just apply ssub_trans; auto, but I required that A <> C and E <> C for the extends_ case. But when I added those, then I had to prove them for that middle class introduced by transitivity, which I knew little about.

To show that A <> t2, I noted that t2 has to be in the class table somewhere, as it is a subclass, while I know that A and E and not in the rest of the class table, as they are at the front.

- (* extends *)

clear dependent CT;

clear dependent C;

clear dependent D.

intros CT C D H_extends.

intros.

unfold_extends H_extends.

apply ssub_extends.

unfold extends_.

exists fs0, ms0.

auto.

Qed.

And extends is just based on looking up C in the table, which doesn't change when we add in two different entries in front of it.

This proof had me stumped for quite a while before I explicitly wrote out P, and manually did the induction.

So sometimes you need the additional generality of index types, however the extra generality might cause Coq to do a worse job when using the induction tactic, so you shouldn't just blindly default to using index types when your datastructure really is fully parametric.

]]>

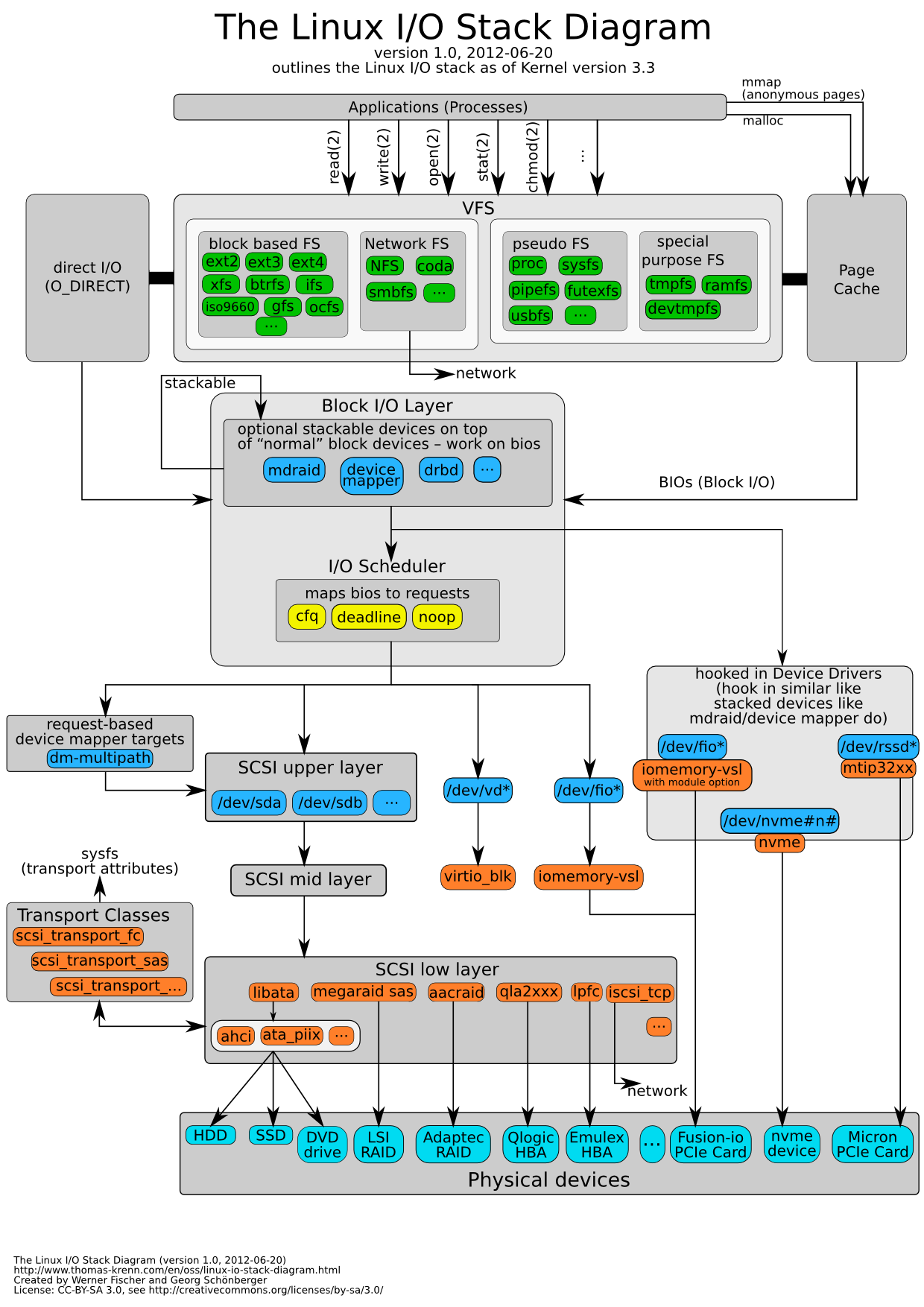

Which I took from thomas-krenn.com, created by Werner Fischer.

Which I took from thomas-krenn.com, created by Werner Fischer.